17/06/2021

Gendering in machine translation – how it works and what to watch out for

Gender-neutral language is a controversial topic, and not only in Germany. In the first two parts of our post, we looked at the situation in Germany and explained our own position, and then took a look at gendering in other languages and countries. In the third and final part, we focus on machine translation, a trending topic in the translation industry, and shed light on whether and how machines can be gender-neutral and which linguistic hurdles have to be overcome in the process.

Can gendering be done by machines?

When it comes to gender equality and gender-neutral language, one thing very quickly becomes clear: there is no right or wrong; instead, there are firm opinions and, quite frequently, tangible disagreements too. While some see it as linguistic frippery and an unnecessary discussion, others emphasize that discussing linguistic niceties does not solve real injustices. Advocates of gender-neutral language argue for more justice and for making everyone visible. Whether and to what extent language can or should be adapted, rearranged and partly regulated is thus a profoundly human and very personal issue. (See also Part 1: “Why oneword uses gender-neutral language, and others should too”)

But what happens when you involve artificial intelligence in this? The question is especially relevant because the discussion, including its human and emotional components, should essentially be a matter of indifference to a machine. For us as language service providers, machine translation (MT) is particularly interesting. It’s a procedure in which a text is transferred into a target language based on algorithms and trained language patterns. Depending on the MT system, this is based either on rules or on a text corpus. In other words: the machine “learns” a language either through predefined language rules or through matching and adopting patterns from existing translations.

But gender-neutral language is a fairly recent phenomenon, with few or no linguistic rules so far, and it is only just starting to be used in bodies of text. As a result, there is a wide range of possibilities for writing with gender neutrality: German uses an asterisk, underscore, colon, double mention or camel case, French has a middot and Spanish uses the characters x, e or @. (See also Part 2: “How other languages do gendering”)
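To make the variety of German notations more concrete, here is a minimal sketch of how such markers could be spotted in a text before it is sent to an MT system. The pattern names and the function `find_gender_markers` are hypothetical and chosen for illustration only; the patterns cover just the common “-in”/“-innen” endings and ignore the double mention, which cannot be detected at word level.

```python
import re

# Hypothetical, non-exhaustive patterns for the German markers listed above.
# Only the "-in"/"-innen" endings are covered; double mention is not handled.
GERMAN_MARKERS = {
    "asterisk": re.compile(r"\b\w+\*in(?:nen)?\b"),
    "underscore": re.compile(r"\b[^\W_]+_in(?:nen)?\b"),
    "colon": re.compile(r"\b\w+:in(?:nen)?\b"),
    "camel_case": re.compile(r"\b[A-ZÄÖÜ][a-zäöüß]+In(?:nen)?\b"),
}


def find_gender_markers(text):
    """Return a sorted list of (marker_name, matched_word) pairs found in text."""
    hits = []
    for name, pattern in GERMAN_MARKERS.items():
        for match in pattern.finditer(text):
            hits.append((name, match.group(0)))
    return sorted(hits)


print(find_gender_markers("Student*innen und Lehrer:innen"))
```

For the sample sentence, the function reports one asterisk form and one colon form. A check like this could help decide in advance which notation a text uses and whether the chosen MT system is known to handle it.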

So for translation, the question is: Can MT systems already recognize patterns when using gender neutrality and correctly transfer them into a target language? Our own extensive testing using various systems, gender symbols and languages shows that it depends on all three factors.

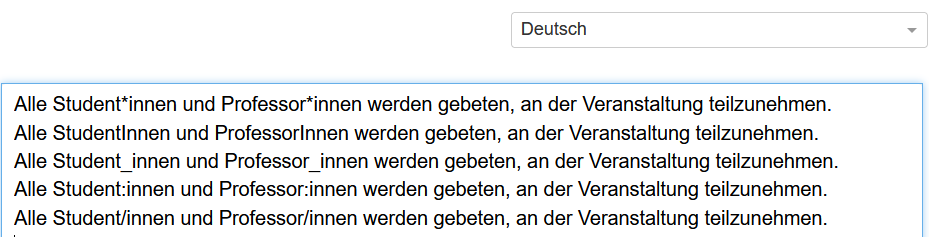

The machines recognize most of the gender symbols used in German and convert them. In other words: the word is recognized as a coherent unit and, depending on the target language, transferred into a neutral or a generic masculine form. “Student*innen” therefore becomes “students” in English (gender-neutral) and “étudiants” in French (masculine). There is no transfer into an equally gender-inclusive form in the target language.

There is, however, an error with the German colon (Student:innen) in test translations into French: a colon in French always requires a space before and after the character. Since this rule is applied before the contextual reference is resolved, the word segment “:innen” is split off and translated as “intérieur” (“inside”). This machine behaviour is understandable, but it completely distorts the meaning of the target sentence. However, this error occurred in only one of the three MT systems tested:

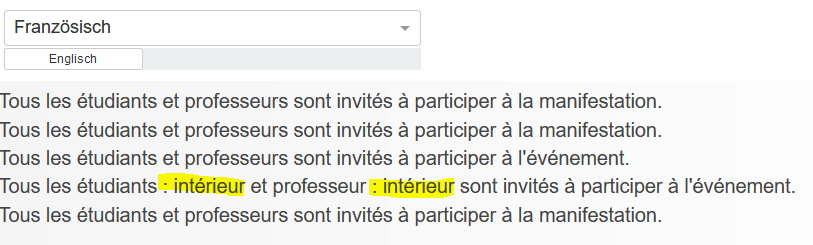

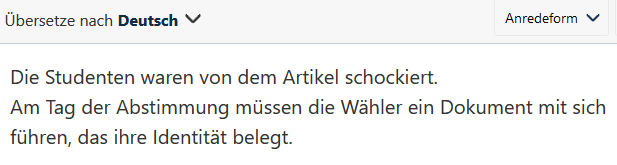

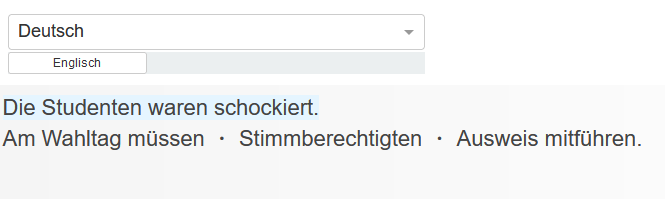

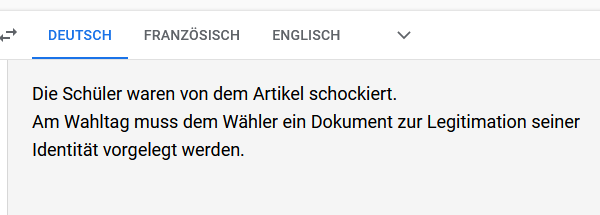
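The colon error described above comes down to the order of processing steps. The following sketch illustrates the effect under stated assumptions: the internal pipelines of the tested systems are not known, so `french_colon_spacing` and `tokenize` are hypothetical stand-ins for a punctuation rule and a tokenizer, not the systems’ actual components.

```python
import re


def french_colon_spacing(text):
    """Put a space on both sides of every colon (simplified French spacing rule)."""
    return re.sub(r"\s*:\s*", " : ", text)


def tokenize(text):
    """Whitespace tokenizer standing in for a real MT tokenizer (an assumption)."""
    return text.split()


source = "Die Student:innen lernen"

# Punctuation rule first: the gendered word is split into three tokens.
rule_first = tokenize(french_colon_spacing(source))

# Word recognition first: the gendered word survives as one unit.
words_first = tokenize(source)

print(rule_first)
print(words_first)
```

In the first case, “innen” ends up as a separate token that can then be translated on its own as “inside”. In the second case, “Student:innen” stays intact and can be handled as a single gendered form.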

In most systems and language pairs, the other gender symbols examined are recognized without any problems, but some are misinterpreted. The French middot, in which all word forms or endings are listed one after the other, separated by dots within the word, causes the greatest linguistic problems: while system 1 recognizes and translates everything correctly, system 2 omits a part in the first sentence and outputs the information only in a cryptic, abbreviated form in the second sentence. System 3 translates the first sentence correctly, but interprets the second sentence incorrectly, as it is not “the voter” who must be shown a document, but “the voters” who must carry a document.

System 1: Correct implementation

System 2: Both sentences incorrect

System 3: 1st sentence correct, 2nd sentence misinterpreted

When using machine translation for gendered texts, it is therefore important to select the MT system carefully and to be aware of potential stumbling blocks. If a system is to be trained specifically for a company, the same applies as for terminology and style guidelines: the gender specifications must already be included in the training material and implemented consistently. In this case, the machine could also be trained to translate correctly into the desired gender form of the target language. Otherwise, as the tests show, gender patterns are recognized but then transferred to the much-discussed generic masculine form. Does this mean that artificial intelligence is perhaps siding with those who oppose gendering?

Can machines discriminate?

Not really, of course. The MT system does not “decide” to implement gendering, any more than it actively discriminates. The underlying algorithm collects and utilizes all available data and information of a language pair in order to recognize and reproduce language patterns on this basis. In this way, the machine translation reflects the currently established communication, including all traditional gender-specific distinctions and role attributions, but without evaluating them or actively deciding in favour of or against a particular linguistic implementation.

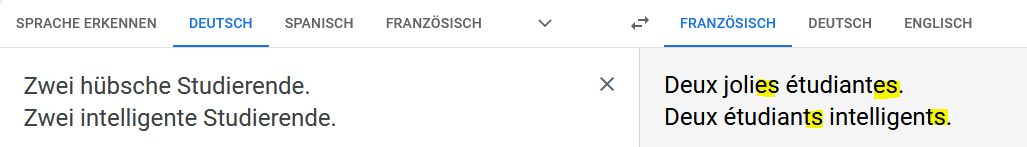

But is it then enough to simply say “it’s not the machine’s fault, that’s the way it learned it”? Our test results show the extent to which the machines reproduce classic role models and thus strike at the heart of the gender debate: if the German adjective “hübsch” (pretty) is added to a subject in a sentence (example: hübsche Studierende / pretty students), the result is usually feminine in gender-specific target languages, but masculine in the case of “intelligent”:

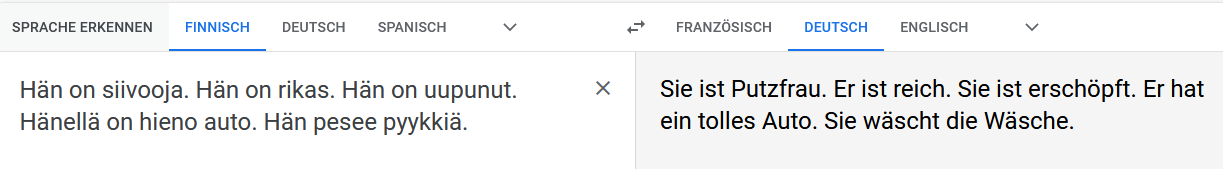

This bias becomes even more pronounced when gender is explicitly specified in a gender-sensitive source language, but this specification is undermined by stereotypes in the algorithm. The male nurse in a Spanish text (enfermero), which is “Krankenpfleger” in German, is usually translated into German as a female nurse, “Krankenschwester”, whereas the female boss (jefa), “Chefin” in German, is translated as the male boss, “Chef”. These role models are also evident in activities and ownership, as an example from Finnish, a language with no distinction between “he” and “she”, shows:

[English translation of the German MT translation: “She is a charlady. He is rich. She is exhausted. He has a great car. She does the laundry.”]

The technically responsible mechanism is a so-called NEAR function, which assigns another word, such as a pronoun, to a word or a group of words purely on the basis of statistics.

This mechanism can be an advantage in machine translation, for example when the machine meaningfully supplies a missing verb. In the context of gender equality and the gender debate, however, it makes socially shaped language habits clearly visible.
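How a purely statistical assignment can reproduce role models may be easier to see in a small sketch. This is not the implementation of the NEAR function mentioned above, which is not documented here; it is a simplified stand-in that picks the pronoun seen most often with a word. The corpus is invented for illustration and is not data from our tests; the function names are hypothetical.

```python
from collections import Counter

# Invented toy corpus of (pronoun, nearby word) pairs, for illustration only.
TOY_CORPUS = [
    ("she", "laundry"), ("she", "laundry"), ("he", "laundry"),
    ("he", "car"), ("he", "car"), ("she", "car"),
]


def build_counts(pairs):
    """Count how often each pronoun occurs together with each word."""
    counts = {}
    for pronoun, word in pairs:
        counts.setdefault(word, Counter())[pronoun] += 1
    return counts


def pick_pronoun(word, counts, default="they"):
    """Choose the pronoun most frequently seen with the word, purely by frequency."""
    if word not in counts:
        return default
    return counts[word].most_common(1)[0][0]


counts = build_counts(TOY_CORPUS)
print(pick_pronoun("laundry", counts))
print(pick_pronoun("car", counts))
```

With this toy data, “laundry” is assigned “she” and “car” is assigned “he”, simply because those pairings are more frequent. The sketch contains no judgment of its own; it only mirrors the distribution in its input.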

So it’s not the machine that discriminates; it’s the role attributions that are embedded deep in our language and thus in the texts used to train the algorithms. Perhaps this is one more argument in favour of breaking up these language patterns and also using gender-inclusive language to help make everyone in society more visible.
